I Did Not Need Two Tools, I Needed One System

"The thought was always the same. Only the doorway changed."

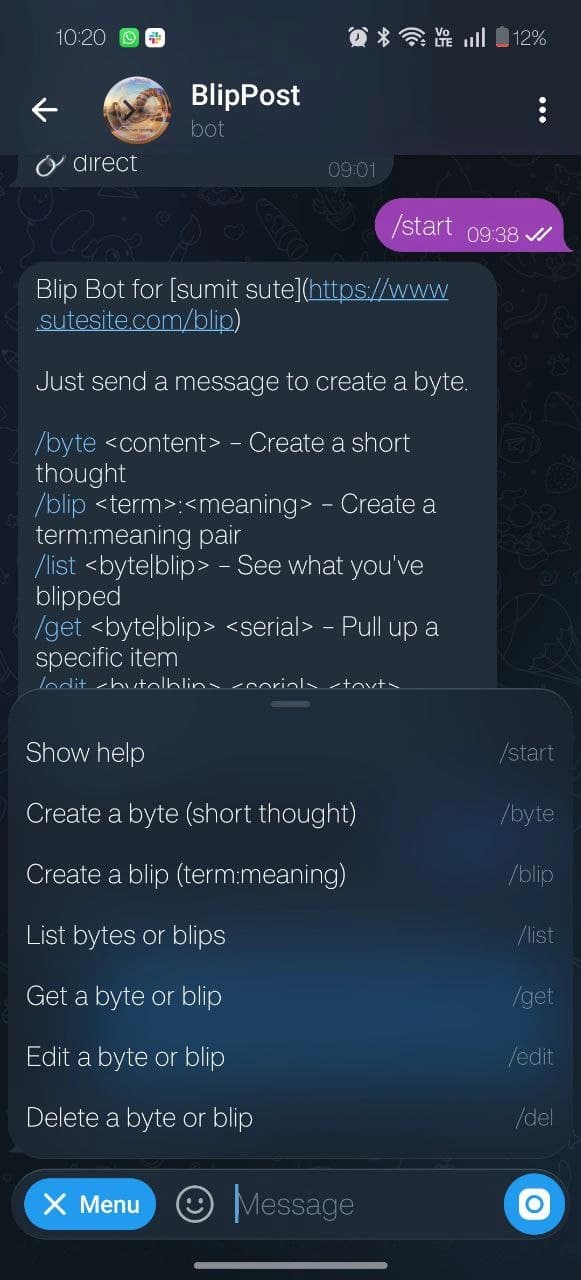

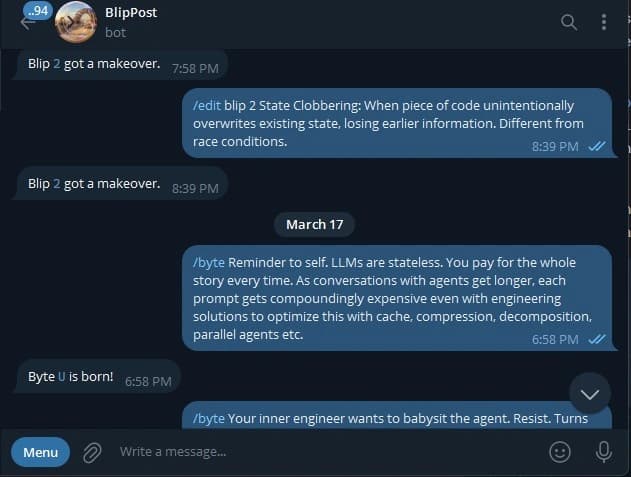

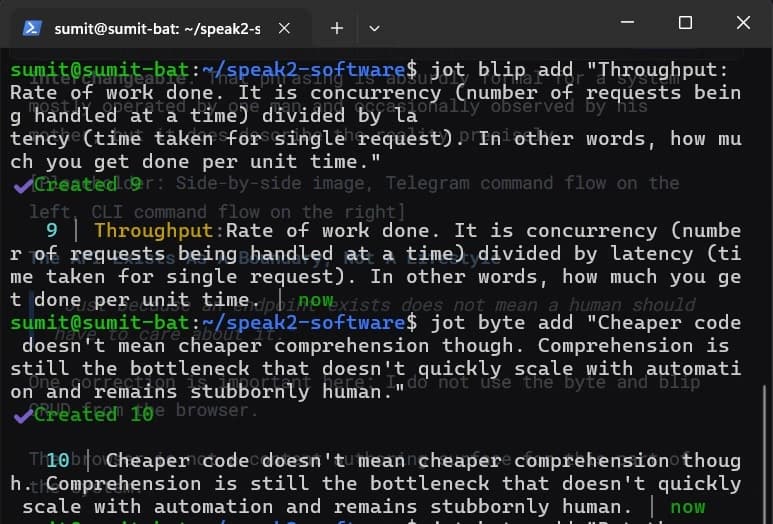

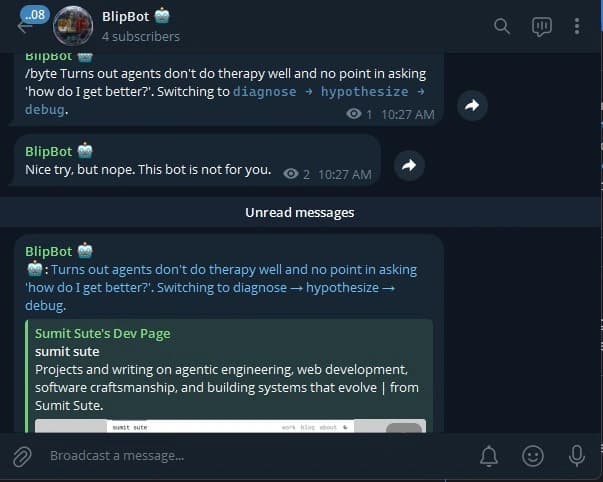

For a while I spoke about the Telegram bot and the CLI as if they were separate accomplishments. One lived on my phone. One lived in the terminal. Both let me create bytes and blips. Both made me feel like I had Built Something. Both were, in architectural terms, slightly full of shit.

The actual problem was simpler.

I wanted one private publishing system for myself.

Not a product. Not a platform. Not a "content ops stack." God help me. Just one system that let me:

- capture a byte, a short thought worth preserving before it evaporates

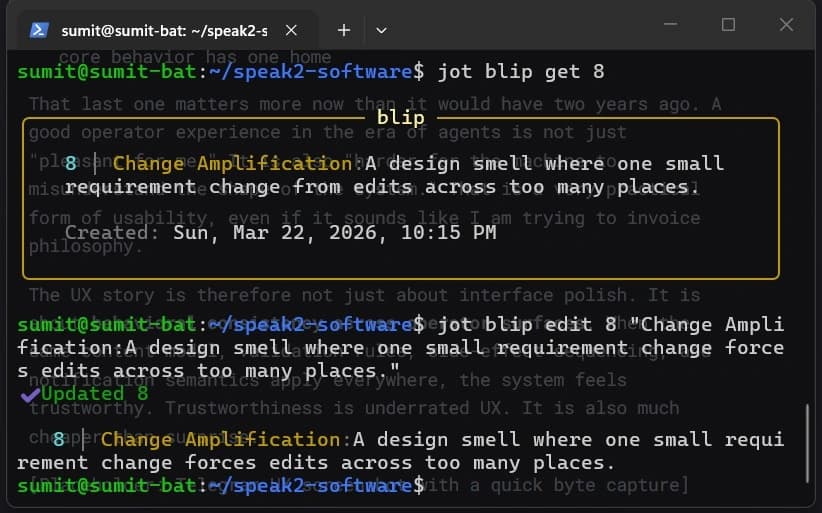

- add or edit a blip, a compact term-and-meaning note for things I want retrievable later

- publish bloqs, the longer pieces where I get to overthink things in public

- keep the public website and public Telegram channel in sync

That is a very different framing.

The public-facing part of this system is the website and the Telegram channel. The CRUD side is mostly just me. The readership is small enough that I can still think of it as "publishing" instead of "distribution." My mother and father remain the most reliable recurring users. They did not ask for a service layer. They received one anyway, which is the sort of thing that happens when the only engineer and the only product team are the same mildly obsessive person.

There is also a notification layer wrapped around all of this. New bytes and blips get broadcast to the channel. New bloqs do too. Visitor notifications go back to me. So this is not only a CRUD story. It is also a small publishing-and-notification system trying very hard not to become an accidental distributed systems lecture.

The Interfaces Were Different, The Use-Case Was Not

"A bot and a CLI can look unrelated right up until they start duplicating the same lies."

Telegram and the terminal solve the same problem under different circumstances. That sentence contains the whole architectural move, so it is worth taking seriously before I drown it in diagrams.

Sometimes the nearest keyboard is the one on my phone. Sometimes I am already in a shell. Sometimes the edit is urgent, sometimes the typo is urgent, and sometimes both are urgent because I have once again posted something with the confidence of a person who clearly should have reread it. The system should not care where the correction came from.

That is the point. Not that the interfaces are the same. They are not. Telegram is chat-shaped. The CLI is command-shaped. One smells faintly of messaging app convenience, the other smells faintly of terminal superiority. But the intent model is the same: create, inspect, update, delete, and publish the same underlying content entities.

That is the first important architectural observation: the transport surface differs, the application semantics do not.

The nice phrase for this is multiple operator interfaces over one bounded application core. The useful consequence is that the system can preserve one set of invariants regardless of entry point. If a blip requires a term and a meaning, that should not become a philosophical debate just because I happened to type it in a chat window instead of a shell.
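To make that concrete, here is a minimal sketch of what "one set of invariants regardless of entry point" could look like. The names `BlipInput`, `Validation`, and `validateBlip` are mine for illustration and not necessarily what the repo uses; the only rule taken from the text is that a blip needs a term and a meaning.

```typescript
// Hypothetical shapes: the article only says a blip needs a term and a meaning.
interface BlipInput {
  term: string;
  meaning: string;
}

type Validation =
  | { ok: true; value: BlipInput }
  | { ok: false; errors: string[] };

// The single place where the "term and meaning are required" invariant lives.
function validateBlip(raw: { term?: string; meaning?: string }): Validation {
  const term = (raw.term ?? "").trim();
  const meaning = (raw.meaning ?? "").trim();
  const errors: string[] = [];
  if (term.length === 0) errors.push("term is required");
  if (meaning.length === 0) errors.push("meaning is required");
  return errors.length === 0
    ? { ok: true, value: { term, meaning } }
    : { ok: false, errors };
}

// Both entry points hand their parsed input to the same function.
const fromTelegram = validateBlip({ term: "idempotent", meaning: "" });
const fromCli = validateBlip({ term: "idempotent", meaning: "safe to repeat" });
```

The chat window and the shell get the same answer because neither of them is the one answering.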

This matters even more in the current era of agentic engineering. An agent can help me implement, refactor, document, and even accidentally vandalize a system much faster than I can on my own. That speed is only an advantage if the system has a clear semantic center. If the rules are smeared across three surfaces, the agent does not see architecture. It sees conflicting clues and picks a side with confidence. Which is also how a raccoon would perform code review if you gave it a linter and emotional momentum.

The Old Shape Was Architecturally Embarrassing

"Every small system eventually reveals whether it was designed or just tolerated."

The old shape had a smell. Not a catastrophic smell. More like the smell of code that technically works but has started to form opinions of its own.

The Telegram path knew how to do CRUD. The API routes knew how to do CRUD. The CLI had its own understanding of the API contract. Notifications were sprinkled around like I had confused orchestration with confetti.

Nothing here was individually outrageous. Together, it meant one behavioral change could require edits in multiple places.

That is how small systems become annoying. Not through scale, but through repetition. You do not need one million users to suffer from bad dependency direction. One exhausted maintainer is enough. Conveniently, I had one on staff, and he was underperforming.

This is also where the article stops being about "clean code" in the decorative sense and starts being about change amplification.

In plain English: one small rule change causes a larger-than-necessary blast radius.

In more senior-developer English: the system had poor locality of behavior, leaky orchestration boundaries, and duplicated application logic across transports. Which is the same truth wearing a blazer.

If changing one rule requires touching Telegram, HTTP, notifications, and the CLI contract separately, the system is not merely duplicated. It is multiplying future work. It is also degrading correctness, because every extra edit point is another opportunity for behavioral drift. That kind of architecture looks harmless until an AI agent, a human, or the increasingly indistinguishable hybrid of the two tries to modify it under time pressure and quietly ships three subtly different truths.

The trade-off is obvious in hindsight:

I chose short-term convenience first, because that is how personal tools usually begin. You build the nearest thing that works. You defer structure. You promise yourself future-you will normalize it later. Future-you does normalize it later, but with the posture of a man cleaning up after a party he personally hosted.

The System Needed A Center Of Gravity

"Good architecture is often just the act of giving the logic one home and the excuses none."

The new system has a center of gravity now.

That diagram is the article, honestly. Everything else is me elaborating because apparently I enjoy hearing myself think.

The center of gravity is the application core:

- services orchestrate use-cases

- repositories isolate persistence

- notifier isolates outbound Telegram delivery

- transport layers stay thin

The senior phrase is application core. It describes a very practical rule:

If I change the behavior of createByte, I want one obvious place to do it.

Not one place for HTTP. Not one place for Telegram. Not one place for "technically the same logic but vibes-based." One place.

The senior phrase here is source of behavioral truth. When the rule changes, I should not need an archaeological survey.
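A rough sketch of that one place, under loud assumptions: only `createByte` comes from this article. `ByteRepository`, `ByteNotifier`, `makeByteService`, and the in-memory stand-ins are invented for illustration, and everything is synchronous for brevity where a real Supabase or Telegram call would be async.

```typescript
// Illustrative names; only createByte is taken from the article.
interface Byte {
  id: number;
  content: string;
}

// Ports: what the use-case needs, not how it is provided.
interface ByteRepository {
  insert(content: string): Byte;
}
interface ByteNotifier {
  broadcastByte(byte: Byte): void;
}

function makeByteService(repo: ByteRepository, notifier: ByteNotifier) {
  return {
    // The one obvious place to change what "create a byte" means.
    createByte(rawContent: string): Byte {
      const content = rawContent.trim();
      if (content.length === 0) throw new Error("byte content is required");
      const byte = repo.insert(content); // persist first
      notifier.broadcastByte(byte); // then announce
      return byte;
    },
  };
}

// In-memory stand-ins so the sketch runs without a database or a bot token.
const stored: Byte[] = [];
const sent: string[] = [];
const byteService = makeByteService(
  {
    insert(content) {
      const byte = { id: stored.length + 1, content };
      stored.push(byte);
      return byte;
    },
  },
  {
    broadcastByte(byte) {
      sent.push(byte.content);
    },
  },
);
const created = byteService.createByte("  ship the boring thing  ");
```

If the rule for bytes changes, the edit lands inside `createByte` and nowhere else. The transports only decide how the string arrives.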

This is also the moment where the system stopped being "a bot plus a CLI" and became a small but coherent application. That coherence buys several things at once:

- lower change surface area

- clearer dependency direction

- easier reasoning about side effects

- better support for agent-assisted edits

That last point deserves more than a passing mention. In agentic engineering, structure is part of the interface. An agent does not just consume code, it consumes affordances. If the architecture makes the correct dependency path obvious, the agent tends to follow it. If the architecture offers six half-plausible places to edit, the agent will still pick one. It just may not be the one you wanted. My previous architecture had too many plausible lies in it.

The Core Has A Contract, The Surfaces Have Adapters

"The route is not the use-case. The handler is not the model. The terminal is not a second backend in a funny hat."

Here is the zoomed-in shape:

This is where some useful terminology starts pulling its weight. Terminology is only annoying when it is ornamental. Once it starts helping you make cleaner decisions, it becomes vocabulary instead of cosplay.

The senior phrase is transport adapter.

The Telegram handler and the CLI client are adapters. They translate from the shape a user interacts with into the shape the application core understands. Telegram speaks commands. The CLI speaks arguments and flags. The core should not have to care.
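As a sketch of what "adapter" means here, assume a made-up command syntax on both sides (the real bot and CLI grammar are not shown in this article). `Intent`, `parseTelegramText`, `parseCliArgs`, and `flagValue` are hypothetical names. The point is only that two differently shaped inputs collapse into one shape the core understands.

```typescript
// Hypothetical command syntax on both sides; the article does not specify it.
type Intent =
  | { kind: "createByte"; content: string }
  | { kind: "createBlip"; term: string; meaning: string };

// Telegram adapter: chat text such as "/byte some thought" or "/blip term | meaning".
function parseTelegramText(text: string): Intent | null {
  const match = /^\/(byte|blip)\s+([\s\S]+)$/.exec(text.trim());
  if (!match) return null;
  const command = match[1] ?? "";
  const rest = match[2] ?? "";
  if (command === "byte") return { kind: "createByte", content: rest.trim() };
  const [term = "", ...meaningParts] = rest.split("|");
  return { kind: "createBlip", term: term.trim(), meaning: meaningParts.join("|").trim() };
}

// Returns the value following a flag, or "" when the flag is absent.
function flagValue(argv: string[], flag: string): string {
  const index = argv.indexOf(flag);
  return index >= 0 ? (argv[index + 1] ?? "") : "";
}

// CLI adapter: argv such as ["blip", "--term", "x", "--meaning", "y"].
function parseCliArgs(argv: string[]): Intent | null {
  if (argv[0] === "byte") {
    return { kind: "createByte", content: flagValue(argv, "--content").trim() };
  }
  if (argv[0] === "blip") {
    return {
      kind: "createBlip",
      term: flagValue(argv, "--term").trim(),
      meaning: flagValue(argv, "--meaning").trim(),
    };
  }
  return null;
}

const viaChat = parseTelegramText("/blip idempotent | safe to repeat");
const viaShell = parseCliArgs(["blip", "--term", "idempotent", "--meaning", "safe to repeat"]);
```

Neither parser validates anything. They translate, and whatever comes out still has to get past the service.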

The senior phrase is application service.

The service owns the use-case. Validation, orchestration, and side effects live here. If a byte should be created and then broadcast, this is the place that decides that sequence.

The senior phrase is persistence adapter.

The repository knows Supabase. The service should not know query syntax any more than my mother should know how a GIN index works. Both are happier this way.

The senior phrase is notification adapter.

The notifier owns outbound delivery. Services should not be formatting Telegram HTML at 2 a.m. while pretending that is domain logic. It is not. It is delivery.
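Here is roughly what "it is delivery" looks like when it is kept out of the service. This is a sketch, not the actual notifier: the message layout, `BlipView`, `formatBlipBroadcast`, and the channel handle are placeholders. What I am relying on from Telegram itself is that HTML parse mode needs `&`, `<`, and `>` escaped in text, and that `sendMessage` takes `chat_id`, `text`, and `parse_mode`.

```typescript
// Telegram's HTML parse mode requires &, < and > in text to be escaped.
function escapeTelegramHtml(text: string): string {
  return text.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Hypothetical view of a blip as the notifier sees it.
interface BlipView {
  term: string;
  meaning: string;
}

// The sendMessage fields this sketch fills in.
interface SendMessagePayload {
  chat_id: string;
  text: string;
  parse_mode: "HTML";
}

// Delivery concern: how a blip looks in the channel. The layout is a guess.
function formatBlipBroadcast(channelId: string, blip: BlipView): SendMessagePayload {
  const text = `<b>${escapeTelegramHtml(blip.term)}</b>\n${escapeTelegramHtml(blip.meaning)}`;
  return { chat_id: channelId, text, parse_mode: "HTML" };
}

// Placeholder channel handle, not the real one.
const payload = formatBlipBroadcast("@example_channel", { term: "a<b", meaning: "x & y" });
```

The service never sees an angle bracket. If the channel formatting changes, the use-case does not find out.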

This split sounds grand for a personal tool. It is also exactly what makes the personal tool pleasant to live with.

The senior phrase is ports-and-adapters architecture, or if one wants to sound slightly less textbook and slightly more sleep-deprived, it is just refusing to let infrastructure concerns boss around the use-case layer.

The trade-off here is not free. Indirection always costs something. You add files. You add interfaces. You accept that the code is less immediately linear than a single route function with everything stuffed into it. But the return on that indirection is strong once the system has multiple entry points and side effects:

- you can swap infrastructure without rewriting intent

- you can test orchestration without booting the world

- you can extend one surface without smuggling behavior into the wrong layer

- you can hand the codebase to an agent without also handing it a scavenger hunt
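The "test orchestration without booting the world" item is the easiest to show. A minimal sketch with recording fakes follows; `BlipStore`, `BlipBroadcaster`, `createBlip`, and `runWithFakes` are hypothetical names, and the calls are synchronous only to keep the example short.

```typescript
// Hypothetical ports, reduced to what this test needs.
interface BlipStore {
  save(term: string, meaning: string): number;
}
interface BlipBroadcaster {
  announce(id: number, term: string): void;
}

// The use-case under test: validate, persist, then announce.
function createBlip(store: BlipStore, out: BlipBroadcaster, term: string, meaning: string): number {
  if (term.trim() === "" || meaning.trim() === "") {
    throw new Error("term and meaning are required");
  }
  const id = store.save(term.trim(), meaning.trim());
  out.announce(id, term.trim());
  return id;
}

// Recording fakes: no database, no network, just a log of calls in order.
function runWithFakes(term: string, meaning: string): string[] {
  const calls: string[] = [];
  const store: BlipStore = {
    save: (t) => {
      calls.push(`save:${t}`);
      return 7;
    },
  };
  const out: BlipBroadcaster = {
    announce: (id, t) => {
      calls.push(`announce:${id}:${t}`);
    },
  };
  try {
    createBlip(store, out, term, meaning);
  } catch {
    calls.push("rejected");
  }
  return calls;
}

const happyPath = runWithFakes("seam", "a place where behavior can change");
const invalid = runWithFakes("seam", "  ");
```

The call log is the assertion: save happens before announce, and invalid input produces neither.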

It is also what makes the system legible to agents, which is becoming a design concern whether we admit it or not. A well-bounded service layer is not just for human elegance. It gives an agent a smaller semantic search space. Instead of asking it to infer business rules from three transport surfaces and two notification side effects, you hand it a clearer graph:

That is not just abstraction. It is context compression. It lowers the number of places where "what happens if I create a blip?" might be answered differently. In agentic work, that reduction is worth an absurd amount because ambiguity scales faster than model quality.

Telegram Is An Input Surface And An Output Surface, Which Is Rude But Useful

"One app doing two jobs is either elegant or suspicious. Usually both."

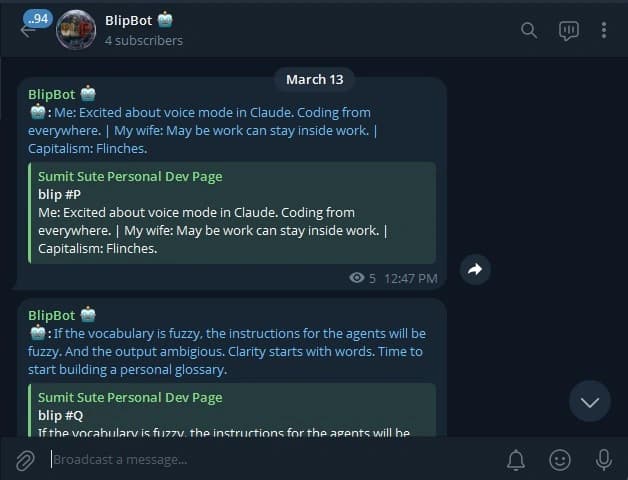

Telegram wears two hats in this system.

First, it is an inbound control surface through the bot. I message the bot. The bot parses commands. The command hits the same application core as everything else.

Second, it is an outbound publishing surface through the channel. New bytes and blips go out there. Bloq publication notices go out there too. Visitor notifications are more private, so they come back to me directly.

This distinction matters because old code has a habit of blurring "we receive a Telegram message" and "we send a Telegram message" into one big Telegram-shaped blob. That blob then becomes impossible to reason about without emotional support.

The refactor cleaned this up by separating:

- bot logic for inbound command handling

- notifier logic for outbound delivery

Same ecosystem, different responsibilities. Same city, different addresses.

This is a good example of role separation. One integration can still play multiple roles, but the code should not blur those roles together. Otherwise every Telegram-related change starts feeling like touching a wet power strip.

There is also a UX reason. When I message the bot, I am acting as the system operator. When the system posts to the channel, it is acting as the publisher. Same app family, different actor, different audience, different constraints.

The bot is optimized for commandability: parse input, authenticate me, route the intent, confirm the result.

The channel is optimized for legibility: format content, keep broadcasts consistent, make the public output feel like one stream instead of four unrelated side quests.

Those are not interchangeable utilities. They sit on opposite sides of the trust boundary:

That distinction becomes more important, not less, in a world where agents help compose and ship changes. If the outbound broadcaster and the inbound command surface are tangled together, every Telegram change drags more unintended context behind it. The fix is not "be careful." The fix is make the roles explicit in code so care is no longer the main safety mechanism. Care is admirable. It is also notoriously non-deterministic.
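A sketch of the inbound half, following the bot's four steps from earlier in this section (parse, authenticate, route, confirm). The update shape, `makeBotHandler`, `RunCommand`, and the reply wording are assumptions for illustration. Note what is absent: nothing in this handler knows how to post to the channel.

```typescript
// Hypothetical inbound update shape, reduced to the two fields used here.
interface IncomingMessage {
  chatId: number;
  text: string;
}

// The inbound side depends on a narrow "run this command" port, nothing outbound.
type RunCommand = (text: string) => string;

function makeBotHandler(operatorChatId: number, run: RunCommand) {
  return function handle(message: IncomingMessage): string {
    // Authenticate: only the operator's own chat may mutate content.
    if (message.chatId !== operatorChatId) return "Not authorized.";
    // Parse: anything that is not a slash command gets a hint, not a mutation.
    if (!message.text.startsWith("/")) return "Send a command, e.g. /byte <text>.";
    // Route the intent to the core, then confirm the result to the operator.
    try {
      return `Done: ${run(message.text)}`;
    } catch (error) {
      return `Failed: ${error instanceof Error ? error.message : "unknown error"}`;
    }
  };
}

// Stand-in for the application core.
const handleUpdate = makeBotHandler(42, (text) => {
  if (text === "/byte") throw new Error("byte content is required");
  return "byte created";
});

const strangerReply = handleUpdate({ chatId: 7, text: "/byte hello" });
const operatorReply = handleUpdate({ chatId: 42, text: "/byte hello" });
const emptyReply = handleUpdate({ chatId: 42, text: "/byte" });
```

The reply goes back to the operator chat as a confirmation. Whether anything reaches the public channel is the service's decision, made through the notifier.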

The Two Operator Surfaces Are Peers, Not A Hierarchy

"Urgency does not respect interface strategy."

I had to resist my own temptation to romanticize the split.

The CLI is not the "serious" surface and Telegram is not the "casual" one. That would make for a tidy paragraph and a false system model. False clarity is still false, even when it sounds elegant in prose.

I do CRUD from both places. Whichever one is closer wins. Urgency of the edit can strike from anywhere:

- on my phone while outside

- in the terminal while already working

- halfway through rereading something and realizing I have committed a small literary crime

So the right framing is not "different levels of seriousness." The right framing is equivalent operator surfaces with different ergonomics.

That distinction matters because architecture often goes wrong when one interface is treated as the real one and the others become tolerated accessories. Once that happens, the "secondary" surface starts lagging behind in validation, features, or behavior, and soon you have what I can only describe as feature feudalism.

The better approach is to treat both as first-class operator surfaces over the same application core. They are capability-equivalent, ergonomically different, operationally interchangeable. That phrasing is absurdly formal for a system mostly operated by one man and occasionally observed by his mother, but it does describe the reality precisely.

The API Exists As A Boundary, Not A Lifestyle

"Just because an endpoint exists does not mean a human should have to care about it."

One correction is important here: I do not use the byte and blip CRUD from the browser.

The browser is not a content authoring surface for this part of the system.

The CRUD API exists for different reasons:

- it provides a stable transport boundary

- it gives the Telegram and CLI paths one canonical contract

- it keeps automation possible

- it prevents each operator surface from inventing its own local truths

That is what an API boundary is doing here. Not serving a front-end feature. Not chasing platform dreams. Just acting as a contract surface between interfaces and the core behavior.

The senior phrase is stable integration boundary.

The practical meaning is: if I add a rule to blip creation, I do not want Telegram and the CLI to each discover it in their own special, creative way, like two interns solving different homework problems and then both insisting they understood the brief perfectly.

This is also where contract-first thinking starts paying rent. Once the interfaces speak through a stable boundary, you can share typed contracts and reason about compatibility more explicitly. In an agentic workflow, that matters because agents are excellent at moving quickly through a stable contract and surprisingly creative when the contract is implied but not written down.
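A written-down contract can be as small as this. It is a sketch under assumptions: `CreateBlipRequest`, `ApiResult`, and the helper names are hypothetical, and the real routes may well validate with a schema library instead of a hand-rolled guard.

```typescript
// Hypothetical contract module imported by the route and by the CLI client.
interface CreateBlipRequest {
  term: string;
  meaning: string;
}
type ApiResult<T> = { ok: true; data: T } | { ok: false; error: string };

// Runtime guard, so the type is enforced on incoming JSON and not just declared.
function isCreateBlipRequest(body: unknown): body is CreateBlipRequest {
  if (typeof body !== "object" || body === null) return false;
  const candidate = body as Record<string, unknown>;
  return typeof candidate["term"] === "string" && typeof candidate["meaning"] === "string";
}

// Route side: parse untrusted JSON into the contract or reject it.
function handleCreateBlip(rawBody: string): ApiResult<CreateBlipRequest> {
  let parsed: unknown;
  try {
    parsed = JSON.parse(rawBody);
  } catch {
    return { ok: false, error: "invalid JSON" };
  }
  return isCreateBlipRequest(parsed)
    ? { ok: true, data: parsed }
    : { ok: false, error: "expected { term, meaning }" };
}

// Client side: the CLI builds its body from the same type, so drift is a compile error.
function buildCreateBlipBody(request: CreateBlipRequest): string {
  return JSON.stringify(request);
}

const roundTrip = handleCreateBlip(buildCreateBlipBody({ term: "seam", meaning: "a joint" }));
const malformed = handleCreateBlip('{"term": 1}');
```

If I rename `meaning` tomorrow, the CLI stops compiling instead of quietly sending the old field.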

There is a trade-off here too. Internal APIs can be overbuilt. Plenty of personal tools die buried beneath their own ceremonial infrastructure. The goal is not to build "platform." The goal is to create a seam where consistency can live. A route contract is useful because it narrows interpretation, not because REST is spiritually enlightening.

The security story lives here too. The bot, the CLI, and the APIs are not interchangeable trust domains, so they should not share a hand-wavy idea of security and call it character development.

The senior phrase is defense in depth. The system is small, but the attack surface is still plural: webhook, CLI, HTTP routes, outbound notifier. Public website, private mutation path. Public channel, private operator bot. Those are different trust zones, and the code should behave like it knows that.
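For illustration only, here is one way separate trust zones could be expressed as separate checks. `TrustConfig` and both function names are hypothetical, the bearer-token scheme for the CLI is an assumption, and header keys are assumed to be lowercased by the server. The one real detail is that Telegram sends a configured webhook secret in the `X-Telegram-Bot-Api-Secret-Token` header. A production version would also want a constant-time comparison rather than `===`.

```typescript
// Assumed credentials per trust zone; names and values are placeholders.
interface TrustConfig {
  webhookSecret: string; // compared against X-Telegram-Bot-Api-Secret-Token
  operatorChatId: number; // the only chat allowed to issue commands
  apiToken: string; // bearer token the CLI sends to mutation routes
}

// Header names are assumed to arrive lowercased.
type HeaderBag = Record<string, string | undefined>;

// Two layers for the webhook: the request must come from Telegram AND from the operator.
function authorizeWebhook(config: TrustConfig, headers: HeaderBag, chatId: number): boolean {
  const fromTelegram = headers["x-telegram-bot-api-secret-token"] === config.webhookSecret;
  return fromTelegram && chatId === config.operatorChatId;
}

// A separate check for the HTTP mutation routes used by the CLI.
function authorizeApi(config: TrustConfig, headers: HeaderBag): boolean {
  return headers["authorization"] === `Bearer ${config.apiToken}`;
}

const trust: TrustConfig = { webhookSecret: "s3cret", operatorChatId: 42, apiToken: "t0ken" };
const validWebhook = authorizeWebhook(trust, { "x-telegram-bot-api-secret-token": "s3cret" }, 42);
const wrongChat = authorizeWebhook(trust, { "x-telegram-bot-api-secret-token": "s3cret" }, 7);
const validCli = authorizeApi(trust, { authorization: "Bearer t0ken" });
```

A leaked API token does not let anyone impersonate the webhook, and a forged webhook call still has to come from the right chat.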

This Is Where SOLID Stopped Being A Textbook And Started Being Housekeeping

"Most design principles become interesting only after they save you from yourself."

I am constitutionally allergic to writing "here is SOLID" as though I am about to unveil a laminated UML diagram and then refuse to leave until everyone applauds polymorphism. But the principles did become useful once the system got real enough to be mildly inconvenient.

Single Responsibility Principle

This matters because each module now changes for one kind of reason.

If Telegram formatting changes, I go to the notifier. If byte validation changes, I go to the service. If storage changes, I go to the repository.

That is not elegance. That is housekeeping. Boring, glorious housekeeping.

It also reduces cognitive load distribution failure, which is a pompous but accurate phrase for "one file is doing too much, so every reader has to do too much too."

Dependency Inversion Principle

The services should not know about concrete infrastructure details.

The senior phrase is dependency inversion. What it bought me here was testability, replaceability, and less coupling. The use-case depends on abstractions, not on whichever library I happened to wire in first while overcaffeinated and slightly resentful.

That is relevant in agentic engineering because concrete infrastructure APIs create sticky context. If a service directly manipulates Supabase queries, Telegram delivery, and transport concerns, an agent changing the use-case now has to reason about all three simultaneously. That is how context windows become suggestion generators instead of engineering tools.

Interface Segregation Principle

Small interfaces are not just academically tidy. They reduce the chance that one module has to understand six things to use one.

The latter is not a real class name in this repo. Yet. I remain vigilant. Mostly because I have met myself.

Open/Closed Principle

The infrastructure can now expand without every use-case needing surgery.

If tomorrow I wanted another output surface, say email or some cursed little desktop widget, the services would not need to relearn what a byte is. They would need a new adapter.

That is the useful version of OCP. Add capability, do not rewrite intent.

Or, less academically: if every new output surface requires surgery across five unrelated files, the architecture is not extensible. It is just stretchy in a medically concerning way.
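A small sketch of the "new adapter, same service" idea. The `OutputSurface` port, `fanOut`, and the email surface are hypothetical; the article names a notifier but not its methods, and no email adapter exists in the repo as far as this text says.

```typescript
// Hypothetical output port; the article names the notifier but not its methods.
interface OutputSurface {
  publish(title: string, body: string): void;
}

// Fan-out: the use-case sees one OutputSurface no matter how many are wired in.
function fanOut(surfaces: OutputSurface[]): OutputSurface {
  return {
    publish(title, body) {
      for (const surface of surfaces) surface.publish(title, body);
    },
  };
}

const deliveries: string[] = [];
const telegramChannel: OutputSurface = {
  publish: (title) => {
    deliveries.push(`telegram:${title}`);
  },
};
// Added later: a new adapter, with no change to the use-case below.
const emailList: OutputSurface = {
  publish: (title) => {
    deliveries.push(`email:${title}`);
  },
};

// Stand-in for a use-case; it only knows the port.
function publishBloq(out: OutputSurface, title: string, body: string): void {
  out.publish(title, body);
}

publishBloq(fanOut([telegramChannel, emailList]), "One System", "body text");
```

Adding the email surface touched the wiring and nothing else. `publishBloq` has no idea it gained an audience.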

SOLID is often mocked because people use it like a motivational poster. Fair enough. But in a small system with multiple operator surfaces, the boring version of SOLID is simply a set of pressures toward higher cohesion and lower coupling. Once phrased that way, it stops sounding like doctrine and starts sounding like avoiding a future headache, which is my favorite kind of principle.

This Is Not Enterprise Software, Which Is Exactly Why The Boundaries Matter

"When you are the only operator, bad architecture is not a scaling problem. It is a recurring tax."

This system does not have:

- multiple internal content editors

- role-based workflows

- moderation queues

- product managers asking for dashboard metrics in Figma comments

What it has is:

- one writer

- two private operator surfaces

- one public website

- one public Telegram channel

- a tiny audience

Which is to say, absolutely not enterprise software.

And still, the boundaries matter.

Why?

Because good architecture at small scale is not about horizontal scale. It is about operational sanity.

If the system is small but badly shaped, I still pay for that shape every time I touch it.

That is the important trade-off:

So yes, I gave my personal publishing machine a shared application core, a generated-style client, a notification boundary, and proper trust separation. This is either admirable discipline or evidence that I should go outside more. I am comfortable leaving that ambiguous.

There is also a broader point here about agentic engineering. Small systems used by one person are exactly where sloppy structure is easiest to rationalize:

- "I know how it works."

- "I can just fix it later."

- "The audience is tiny."

All true. Also all excellent ways to build a system that punishes future edits. Agents make that trade-off sharper, not softer. When an agent enters the picture, clarity compounds and ambiguity compounds. A clean boundary helps both human and agent collaboration. A muddy one gives you fast local progress and weird global behavior. Very modern. Very 2026.

This is one of the more interesting reversals of the current moment. Agentic tooling makes architecture feel simultaneously less glamorous and more consequential. Less glamorous, because an agent can brute-force quite a lot of messy code. More consequential, because the quality of the boundaries determines whether those changes compose into a coherent system or a very efficient pile of almost-correct edits.

The User Experience I Actually Wanted

"The architecture is working when the interface disappears before the thought does."

The experience I wanted was boring in the best way.

Same model. Same rules. Same system. Different doorways.

That means:

- I do not have to remember two sets of business rules

- I do not have to worry that one surface broadcasts and the other forgets

- I do not have to treat the bot and the CLI like separate little kingdoms

The ideal user experience for a private content system is not delight. It is absence of friction.

Delight is nice. Frictionlessness is rent.

There is a useful engineering term for this too: operational ergonomics. Not the aesthetics of the interface, but the effort required to complete real work repeatedly without swearing at the architecture.

The good UX outcome here is:

- I do not think about which rules apply in which interface

- I do not mentally translate between two models of the same content

- I do not discover after the fact that one path notifies and another path quietly forgot

- I do not have to babysit agent-generated changes because the core behavior has one home

That last one matters more now than it would have two years ago. A good operator experience in the era of agents is not just "pleasant for me." It is also "harder for the machine to misunderstand the shape of the system." That is a very practical form of usability, even if it sounds like I am trying to invoice philosophy.

The UX story is therefore not just about interface polish. It is about behavioral consistency across operator surfaces. When the same content model, validation rules, side-effect sequencing, and notification semantics apply everywhere, the system feels trustworthy. Trustworthiness is underrated UX. It is also much cheaper than surprise.

The glamorous version of this story is that I designed a multi-surface authoring platform. The honest version is that I got tired of my own tools disagreeing with each other.

The Best Architecture Here Is The One That Gets Out Of My Way

"A private system earns its keep by becoming unremarkable."

I like that the new architecture sounds more serious than the scale of the project strictly requires. That is funny to me. It is also correct.

The system now has:

- two private operator interfaces

- one shared application core

- one persistence boundary

- one outbound notification path

- one public website and one public channel downstream of all that

That sounds neat on a diagram because it is neat on a diagram. But more importantly, it is calmer in practice.

I can message the bot from my phone. I can use the CLI from my terminal. I can publish to the site and the channel without wondering which surface forgot what.

That is the whole win.

Not scale. Not architectural virtuosity. Not the fantasy that I have built some tiny internal developer platform and should now speak at conferences wearing expensive sneakers.

Just one coherent private publishing system, with two operator doorways and a public face.

The refactoring is part of that story, but not the headline. The real headline is that the system now has a cleaner ontology. The responsibilities are more explicit. The dependency graph makes more sense. The entry points are symmetrical. The behavior is less negotiable. In other words, the software is finally acting like it knows what it is.

Which is, frankly, a much nicer arrangement than having three semi-independent republics all claiming to be the Ministry of Thoughts.